Image: DALL-E 2, prompted by MIXED

Deepmind’s new AI, Transframer, can handle a whole range of image and video-related tasks and create 30-second videos from a single frame.

Generative AI systems have moved from research labs into industrial and consumer applications in recent years, a shift initiated by OpenAI’s large language model GPT-3. In April 2022, the company introduced the image generation system DALL-E 2, which indirectly spawned alternatives such as Midjourney and Stable Diffusion.

Google’s sister company Deepmind is now showing Transframer, an AI model that could offer a glimpse of the next generation of generative AI models.

Deepmind Transframer – a model with many tasks

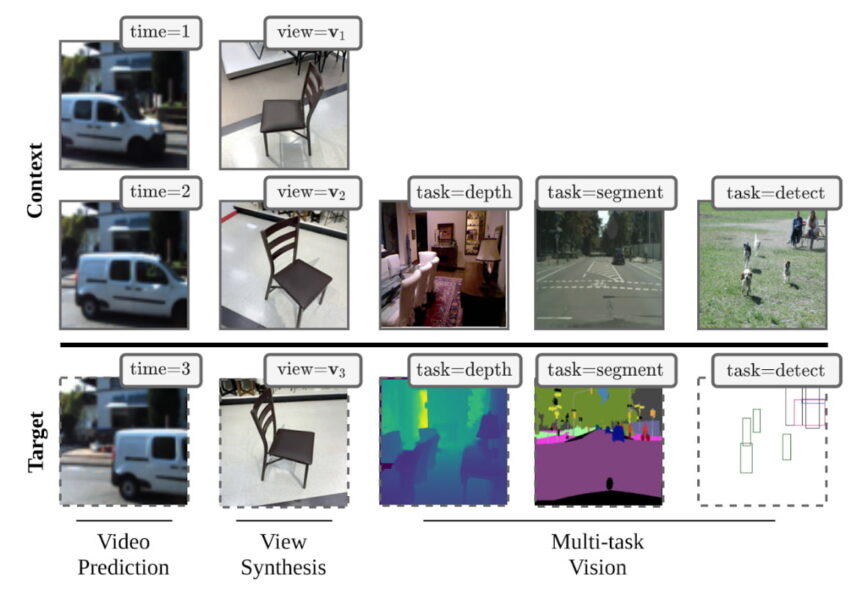

Deepmind’s Transframer is a visual prediction framework capable of solving eight modeling and image processing tasks simultaneously, such as depth estimation, instance segmentation, object recognition or video prediction.

Transframer takes a set of context images with associated annotations, such as timestamps or camera views, and uses them to answer a query for a new image.
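The idea of context images, annotations, and a query can be sketched as a small data structure. This is only an illustration of how video prediction could be phrased in that form. The class names, field names, and the `video_prediction_query` helper are hypothetical and are not taken from Deepmind's code or paper.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple


@dataclass(frozen=True)
class Annotation:
    """Hypothetical metadata attached to a frame (names are assumptions)."""
    timestamp: Optional[float] = None                # seconds, for video tasks
    camera_pose: Optional[Tuple[float, ...]] = None  # for view synthesis tasks


@dataclass(frozen=True)
class ContextFrame:
    pixels: Sequence[Sequence[float]]  # toy grayscale image as nested lists
    annotation: Annotation


@dataclass(frozen=True)
class Query:
    context: Tuple[ContextFrame, ...]  # frames the model may condition on
    target: Annotation                 # annotation of the frame to predict


def video_prediction_query(frames, seconds_per_frame, seconds_ahead):
    """Phrase 'predict a future frame' as context frames plus a target timestamp."""
    if not frames:
        raise ValueError("at least one context frame is required")
    context = tuple(
        ContextFrame(pixels=f, annotation=Annotation(timestamp=i * seconds_per_frame))
        for i, f in enumerate(frames)
    )
    last_timestamp = context[-1].annotation.timestamp
    return Query(context=context, target=Annotation(timestamp=last_timestamp + seconds_ahead))
```

A view synthesis query would look the same in this sketch, except that the annotations would carry camera poses instead of timestamps.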

Transframer provides a framework for multiple image tasks. | Image: Deepmind

The model processes compressed images using a U-Net whose outputs are passed to a DCTransformer decoder. Specifically, the images are compressed with the discrete cosine transform (DCT), which is also used in the JPEG compression method. The DCTransformer is specialized for DCT tokens.
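To give a feel for what DCT compression does, here is a minimal pure-Python sketch of a JPEG-style 2D DCT on a single image block. It is not Transframer's pipeline: the 8x8 block size and all function names are assumptions chosen for illustration, and the real model works on its own DCT token format.

```python
import math

BLOCK = 8  # JPEG-style block size; an assumption, not taken from the article


def dct_matrix(n=BLOCK):
    """Orthonormal DCT-II basis matrix of size n x n."""
    rows = []
    for k in range(n):
        scale = math.sqrt(1.0 / n) if k == 0 else math.sqrt(2.0 / n)
        rows.append([scale * math.cos(math.pi * (2 * i + 1) * k / (2 * n))
                     for i in range(n)])
    return rows


def matmul(a, b):
    """Plain nested-list matrix product."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def transpose(m):
    return [list(row) for row in zip(*m)]


def dct2(block):
    """2D DCT of a square block: C * X * C^T."""
    c = dct_matrix(len(block))
    return matmul(matmul(c, block), transpose(c))


def idct2(coeffs):
    """Inverse 2D DCT: C^T * Y * C (valid because C is orthonormal)."""
    c = dct_matrix(len(coeffs))
    return matmul(matmul(transpose(c), coeffs), c)


def keep_low_frequencies(coeffs, keep):
    """Zero every coefficient outside the top-left keep x keep corner."""
    return [[v if (u < keep and w < keep) else 0.0 for w, v in enumerate(row)]
            for u, row in enumerate(coeffs)]
```

Calling `idct2(keep_low_frequencies(dct2(block), 2))` reconstructs a smoothed version of `block` from only four coefficients. Most of the energy in natural images sits in the low frequencies, which is why compression schemes such as JPEG can discard or coarsely quantize the rest.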

Transframer generates new angles and entire videos

In addition to traditional imaging tasks such as depth estimation and object detection, Transframer is also capable of synthesizing new views of an object and predicting video trajectories.

In a tweet, Deepmind shows about six 30-second videos that Transframer generated from a single input image each. Despite the low resolution, some textures are recognizable.

Transframer is a general-purpose generative framework that can handle many image and video tasks in a probabilistic setting. The new work shows it excels at video prediction and view synthesis and can generate 30-second videos from a single image: https://t.co/wX3nrrYEEa pic.twitter.com/gQk6f9nZyg

– DeepMind (@DeepMind) August 15, 2022

Deepmind says the results show that a framework like Transframer is suitable for demanding image and video modeling tasks. Transframer can also act as a multitask model for image and video analysis problems that previously required specialized models, the researchers said.
